The key advantages & disadvantages of serverless computing

Serverless computing is a cloud-based development and execution model that lets developers focus on building applications without worrying about the back-end infrastructure that supports them. This blog post focuses on the key advantages and disadvantages of serverless computing. If you’re looking for more comprehensive information on what serverless computing is, check out our dedicated article: ‘What is serverless computing?’.

Advantages of Serverless Computing

Serverless computing has fundamentally changed application development. Here are some of its most important business advantages:

Faster deployment times

Serverless computing enables developers to take an application to market, roll out a new feature or release a crucial update quickly, often within minutes of the code being ready. This is because serverless streamlines DevOps cycles: developers can integrate, test, deliver and deploy code without first provisioning or configuring infrastructure.

Simplified scalability

Serverless infrastructure can be stood up and torn down far more quickly than alternatives such as on-premises containers. Auto-scaling enhances this further by automatically adding capacity when demand increases and removing it when demand falls.
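
To make the auto-scaling idea concrete, here is a minimal sketch of the arithmetic a platform has to perform when demand changes. It assumes the number of requests in flight is roughly the arrival rate multiplied by the average duration (Little's law); the function name and the `per_instance_concurrency` parameter are illustrative and do not come from any particular vendor.

```python
import math


def required_instances(requests_per_second: float,
                       avg_duration_s: float,
                       per_instance_concurrency: int = 1) -> int:
    """Estimate how many function instances are needed to serve the load.

    Requests in flight ~= arrival rate * average duration (Little's law).
    per_instance_concurrency is an assumed platform setting.
    """
    in_flight = requests_per_second * avg_duration_s
    return math.ceil(in_flight / per_instance_concurrency)


# A quiet period and a traffic spike for the same function.
print(required_instances(8, 0.25))    # quiet period
print(required_instances(200, 0.25))  # traffic spike
```

With a serverless platform this calculation happens for you; with fixed servers, someone has to size for the spike in advance.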

Pay-as-you-go cost

As you only pay for the infrastructure that you actually use, serverless computing removes wasted spend on idle servers and can be more cost-effective than renting or purchasing fixed servers. Your organisation can also save on personnel costs by not having to hire infrastructure engineers to manage your hardware.
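
As a rough illustration of pay-as-you-go billing, the sketch below estimates a monthly bill from usage alone. It assumes a billing model with a per-request charge plus a charge per GB-second of compute, which is a common shape for FaaS pricing; every price in the example is a placeholder, not a real vendor rate.

```python
def monthly_bill(invocations: int,
                 avg_duration_s: float,
                 memory_gb: float,
                 price_per_gb_second: float,
                 price_per_million_requests: float) -> float:
    """Estimate a usage-based bill; all prices are hypothetical inputs."""
    compute_cost = invocations * avg_duration_s * memory_gb * price_per_gb_second
    request_cost = invocations / 1_000_000 * price_per_million_requests
    return compute_cost + request_cost


# Placeholder figures: 2 million calls, 0.5 s each, 0.5 GB of memory.
bill = monthly_bill(2_000_000, 0.5, 0.5,
                    price_per_gb_second=0.00002,
                    price_per_million_requests=0.25)
print(f"Estimated bill: {bill:.2f}")
```

The point to notice is that a month with zero invocations produces a bill of zero, which a fixed server cannot match.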

Simplified backend software development

Function-as-a-Service (FaaS) is a backend platform offered by most serverless computing vendors. It enables developers to execute and scale code quickly and easily without worrying about multithreading or handling HTTP requests directly. However, you will need to ensure that the vendor supports your chosen development language.
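
The sketch below shows roughly what a FaaS function looks like from the developer's side. It is modelled loosely on the event-and-context handler shape that several vendors use; the `name` field and the response layout are assumptions made for illustration, so check your vendor's documentation for the exact contract.

```python
import json


def handler(event, context=None):
    """Entry point the platform would call once per request.

    There is no server loop, thread pool or HTTP parsing here:
    the platform is assumed to deliver the request as a dict.
    """
    name = event.get("name", "world")  # hypothetical input field
    return {
        "statusCode": 200,
        "body": json.dumps({"message": f"Hello, {name}!"}),
    }


# Calling the function directly, as the platform would.
print(handler({"name": "Ada"}))
```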

Disadvantages of Serverless Computing

Serverless computing is not a perfect solution for every application. Here are a few of the drawbacks worth noting:

Cold starts can lead to unacceptable latency

Serverless computing vendors respond dynamically to user requests and traffic, automatically allocating infrastructure resources to keep the user experience consistent as traffic increases. However, this works both ways. If a serverless function has been dormant for some time, the vendor can shut it down to save resources, which leads to a cold start the next time the function is called. A cold start is when a dormant function has to be started from scratch before it can run, which increases latency for the user. This latency would be unacceptable for some applications, such as financial trading applications. Until recently this was considered an unavoidable trade-off of using serverless computing, but vendors have begun investing in resolving the issue.
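
The cold start behaviour described above can be sketched as a small simulation. The idle timeout, cold start penalty and run time below are made-up numbers chosen for readability; real values vary by vendor, runtime and function size.

```python
class SimulatedFunction:
    """Toy model of a function that is shut down after sitting idle."""

    def __init__(self, idle_timeout_s=300.0, cold_start_s=1.0, run_s=0.25):
        self.idle_timeout_s = idle_timeout_s  # assumed idle limit
        self.cold_start_s = cold_start_s      # assumed start-up penalty
        self.run_s = run_s                    # assumed execution time
        self._last_call = None

    def invoke(self, now_s: float) -> float:
        """Return the simulated latency for a call arriving at now_s."""
        is_cold = (self._last_call is None
                   or now_s - self._last_call > self.idle_timeout_s)
        self._last_call = now_s
        return self.run_s + (self.cold_start_s if is_cold else 0.0)


fn = SimulatedFunction()
for arrival in (0.0, 10.0, 400.0):
    print(f"t={arrival:>5}s latency={fn.invoke(arrival)}s")
```

The first call and the call after a long gap both pay the start-up penalty, while the call that arrives shortly after another does not.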

Stable workloads may not be as cost-effective

The pay-as-you-go model, coupled with the scalability of serverless computing, helps to strike a balance between cost and performance for unpredictable workloads or applications that will need to scale. Applications with stable, long-running or predictable workloads, however, may achieve better cost-effectiveness and performance on traditional architecture.
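
One way to reason about this trade-off is to work out the break-even point between a per-invocation price and a fixed monthly server cost. The sketch below does that under simplifying assumptions: a single flat cost per invocation and a single flat server price, both of which are placeholder figures.

```python
def break_even_invocations(fixed_monthly_cost: float,
                           cost_per_invocation: float) -> float:
    """Monthly invocations at which both options cost the same."""
    return fixed_monthly_cost / cost_per_invocation


def cheaper_option(monthly_invocations: int,
                   cost_per_invocation: float,
                   fixed_monthly_cost: float) -> str:
    """Compare a usage-based bill against a fixed server bill."""
    serverless_cost = monthly_invocations * cost_per_invocation
    return "serverless" if serverless_cost < fixed_monthly_cost else "fixed"


# Placeholder prices: 0.00001 per call versus 100 per month for a server.
print(break_even_invocations(100.0, 0.00001))
print(cheaper_option(1_000_000, 0.00001, 100.0))   # spiky, low volume
print(cheaper_option(50_000_000, 0.00001, 100.0))  # steady, high volume
```

A workload that sits well above the break-even point every month is a candidate for fixed infrastructure; one that sits below it, or swings widely, favours serverless.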

Debugging and testing can be challenging

Because developers often lack visibility into the back end that runs their code, testing and debugging can be difficult in serverless cloud environments.
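
One common way to reduce this pain is to keep the function's logic testable on a developer machine by passing its dependencies in, so a test can swap the managed service for an in-memory stand-in. The sketch below assumes a hypothetical order-saving function; `InMemoryStore`, `make_handler` and the event fields are all invented for illustration.

```python
class InMemoryStore:
    """Test double standing in for a managed database (hypothetical)."""

    def __init__(self):
        self.items = {}

    def put(self, key, value):
        self.items[key] = value


def make_handler(store):
    """Build a handler bound to whichever store it is given."""
    def handler(event, context=None):
        order_id = event.get("order_id")
        if not order_id:
            return {"statusCode": 400, "body": "order_id is required"}
        store.put(order_id, event.get("total", 0))
        return {"statusCode": 201, "body": order_id}
    return handler


def test_valid_order_is_stored():
    store = InMemoryStore()
    response = make_handler(store)({"order_id": "A1", "total": 42})
    assert response["statusCode"] == 201
    assert store.items == {"A1": 42}


def test_missing_order_id_is_rejected():
    store = InMemoryStore()
    response = make_handler(store)({"total": 42})
    assert response["statusCode"] == 400
    assert store.items == {}
```

Tests written this way run locally with a test runner such as pytest. They do not replace testing in the real cloud environment, but they catch logic errors before deployment.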

Consider vendor lock-in

As mentioned above, having your infrastructure managed by a cloud provider unlocks significant benefits for your application development, but you will become reliant on that vendor. Changing vendors, or using different vendors for different applications, also adds complexity, as each vendor has its own workflows and features.
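
A common mitigation is to keep business logic free of vendor-specific details and confine those details to thin adapter functions. The sketch below illustrates the pattern with two entirely hypothetical vendors, "A" and "B", whose event shapes are invented for the example.

```python
import json


def create_greeting(name: str) -> dict:
    """Vendor-neutral business logic: no cloud-specific types."""
    return {"message": f"Hello, {name}!"}


def vendor_a_handler(event, context=None):
    """Adapter for hypothetical vendor A: JSON string under 'body'."""
    payload = json.loads(event.get("body") or "{}")
    result = create_greeting(payload.get("name", "world"))
    return {"statusCode": 200, "body": json.dumps(result)}


def vendor_b_handler(request: dict) -> str:
    """Adapter for hypothetical vendor B: parsed JSON under 'json'."""
    payload = request.get("json") or {}
    return json.dumps(create_greeting(payload.get("name", "world")))


print(vendor_a_handler({"body": '{"name": "Ada"}'}))
print(vendor_b_handler({"json": {"name": "Ada"}}))
```

Moving vendors then means rewriting the adapters rather than the application, although managed services such as databases and queues can still tie you to a provider.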

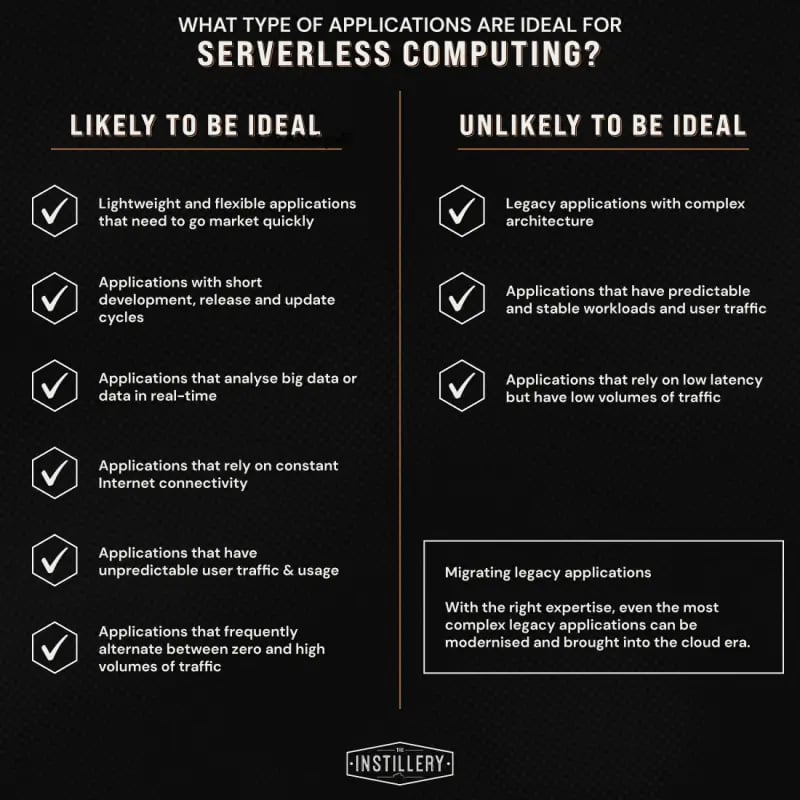

What applications should use serverless computing?

Summary

Serverless computing is still an evolving technology but has been around long enough for the advantages and disadvantages above to be well documented. It is not a perfect solution for every application and it is essential to review all infrastructure requirements before you commit to an execution model. If you have any questions on the above content or would like to speak to an expert about an upcoming DevOps project, please reach out.